An Unreal MML Experiment

Hello hello!

So, I've been working on a bit of a personal project, and I think it is perhaps far enough along to say something about. It's still incredibly early, but these are the barest babiest bones of a rhythm game. The distinction from most others is that it's also synthesizing the music live at runtime.

Briefly, there's a text-based music notation system called MML, or Music Macro Language. It's relatively uncommon, but I think it's a neat way of encoding and playing back songs. A typical string might look like this:

ccggaag2 ffeeddc2 ggffeed2 ggffeed2 ccggaag2 ffeeddc2

Twinkle Twinkle Little Star, for reference.

A B C D E F G are parsed as notes, optionally followed by a number parsed as the duration. There's typically a default octave and a default duration, so if either value is omitted, the parser falls back to the default.
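To make that concrete, here's a minimal sketch of the note-plus-optional-number rule in plain C++. It is not the parser I'm actually using; `ParsedNote`, `ParseNotes`, and the default length of 4 are names and values I made up for illustration. It also assumes the usual MML convention that the number is a length denominator (2 = half note, 4 = quarter note).

```cpp
#include <cassert>
#include <cctype>
#include <cstddef>
#include <string>
#include <vector>

// One parsed note: a letter a-g and a length denominator (4 = quarter note).
struct ParsedNote {
    char letter;
    int length;
};

// Hypothetical helper: reads only note letters and their optional trailing
// number. Anything else (spaces, commands this sketch doesn't know) is skipped.
std::vector<ParsedNote> ParseNotes(const std::string& mml, int defaultLength = 4) {
    assert(defaultLength > 0);
    std::vector<ParsedNote> notes;
    std::size_t i = 0;
    while (i < mml.size()) {
        const char c = static_cast<char>(
            std::tolower(static_cast<unsigned char>(mml[i])));
        ++i;
        if (c < 'a' || c > 'g') continue;  // not a note letter

        // Collect an optional run of digits right after the letter.
        int length = 0;
        while (i < mml.size() && std::isdigit(static_cast<unsigned char>(mml[i]))) {
            length = length * 10 + (mml[i] - '0');
            ++i;
        }
        // No digits means "use the default length".
        notes.push_back({c, length > 0 ? length : defaultLength});
    }
    return notes;
}
```

Feeding it `ccggaag2` gives seven notes, where the first six use the default length and the last one is explicitly a 2.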

I picked up A tiny MML parser as a starting point and modified it to play nice with Unreal. It's written in C, so thankfully it wasn't a huge hassle to integrate. But that only handles the parsing piece: reading the text and deciding what it means. It doesn't actually play anything on its own. Unreal has a system called MetaSounds that basically works like a music synthesizer; you can feed it variables, like frequency and time, to adjust playback.

Initially, I wanted to see if I could get specific music notes playing at all, so I set up a data table that mapped particular notes to their frequencies, then randomly grabbed one of them and played it.
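I used a data table for this, but the same values can also be computed. As a rough sketch, assuming standard equal temperament tuned to A4 = 440 Hz, something like the following would work. `SemitoneFromC` and `NoteFrequency` are hypothetical names, not anything from Unreal or the parser.

```cpp
#include <cassert>
#include <cmath>

// Semitone offset of each natural note from C within one octave.
// Returns -1 for anything that isn't a-g.
int SemitoneFromC(char letter) {
    switch (letter) {
        case 'c': return 0;
        case 'd': return 2;
        case 'e': return 4;
        case 'f': return 5;
        case 'g': return 7;
        case 'a': return 9;
        case 'b': return 11;
        default:  return -1;
    }
}

// Frequency in Hz of a natural note in the given octave,
// using 12-tone equal temperament with A4 = 440 Hz.
double NoteFrequency(char letter, int octave) {
    const int semitone = SemitoneFromC(letter);
    assert(semitone >= 0);
    // MIDI-style note number: C4 is 60, A4 is 69.
    const int midi = 12 * (octave + 1) + semitone;
    return 440.0 * std::pow(2.0, (midi - 69) / 12.0);
}
```

A table is still handy if you want designers to tweak values by hand, so this is more of an alternative than a replacement.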

But this wasn't actually connected to the parser at all, so the next piece was getting a given string from the parser down to the MetaSound.

This gets us to one note! The same note, but still a note! I also had to figure out how to play particular lengths, and get that to matter somehow. There wasn't exactly a "play this MetaSound for x amount of time" option built in, so I made one.

Getting from here to a full MML string was a bit trickier. The parser would return its results pretty much all at once, so you'd have this chord-like effect, which is sometimes cool, but not if you're trying to play a whole song over time.

To handle this I built a note schedule, which takes a parsed note, adds it to the schedule, and then uses that note's length to adjust where in the schedule to write next.
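The idea is basically a write cursor that moves forward by each note's length. Here's a stripped-down sketch in plain C++ rather than my actual Unreal code. `NoteSchedule`, `ScheduledNote`, and the notion of a fixed "step" grid (say, one step per sixteenth note) are assumptions for the example.

```cpp
#include <cassert>
#include <map>

// A note placed on the schedule, with its length in grid steps.
struct ScheduledNote {
    char letter;
    int lengthInSteps;
};

// Hypothetical schedule: notes keyed by the step they start on.
class NoteSchedule {
public:
    // Write the note at the cursor, then move the cursor past it so the
    // next note starts when this one ends instead of stacking on top of it.
    void Add(char letter, int lengthInSteps) {
        assert(lengthInSteps > 0);
        notesByStep_[cursor_] = ScheduledNote{letter, lengthInSteps};
        cursor_ += lengthInSteps;
    }

    int Cursor() const { return cursor_; }
    const std::map<int, ScheduledNote>& Notes() const { return notesByStep_; }

private:
    std::map<int, ScheduledNote> notesByStep_;
    int cursor_ = 0;
};
```

With one step per sixteenth note, adding two quarter notes and a half note puts them at steps 0, 4, and 8, and leaves the cursor at 16.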

At this point I realized my playback logic was kind of bad. Notes were being played multiple times from the schedule, which wasn't quite what I was going for. I was using a Quartz clock to decide when to play back notes, but I wasn't removing notes from the schedule once they'd been played. After fixing that, we have something that almost sounds like music.
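The fix boils down to "take the due notes and erase them in the same step". Here's a sketch of that, assuming a schedule keyed by step number and a tick callback that knows the current step. `TakeDueNotes` is a made-up function, and nothing here touches the real Quartz API.

```cpp
#include <cassert>
#include <map>
#include <vector>

// Hypothetical tick handler: returns every note whose start step has
// arrived, and erases those entries so they cannot fire again next tick.
std::vector<char> TakeDueNotes(std::map<int, char>& schedule, int currentStep) {
    assert(currentStep >= 0);
    std::vector<char> due;

    // upper_bound gives the first entry that starts strictly after currentStep,
    // so [begin, end) is everything that is due now or overdue.
    const auto end = schedule.upper_bound(currentStep);
    for (auto it = schedule.begin(); it != end; ++it) {
        due.push_back(it->second);
    }
    schedule.erase(schedule.begin(), end);
    return due;
}
```

Calling it twice on the same step returns the note the first time and nothing the second time, which is exactly the behavior that was missing.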

But notes and note lengths are only part of MML. We'd also like the ability to adjust the playback BPM and shift octaves. Quartz mostly handles BPM for us, with some modifications to the note lengths.
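For reference, the arithmetic behind turning a note length into actual time is small. This sketch assumes the beat is a quarter note and that the MML number is a length denominator (1 = whole, 4 = quarter, 8 = eighth). `NoteSeconds` is a hypothetical helper, not a Quartz function.

```cpp
#include <cassert>

// Seconds a note should last at the given tempo.
// length is the MML length denominator: 1 = whole, 2 = half, 4 = quarter.
double NoteSeconds(double bpm, int length) {
    assert(bpm > 0.0 && length > 0);
    const double secondsPerBeat = 60.0 / bpm;  // one quarter note
    const double secondsPerWhole = secondsPerBeat * 4.0;
    return secondsPerWhole / length;
}
```

At 120 BPM a quarter note comes out to half a second and a half note to a full second.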

Octave adjustments are also relatively straightforward:

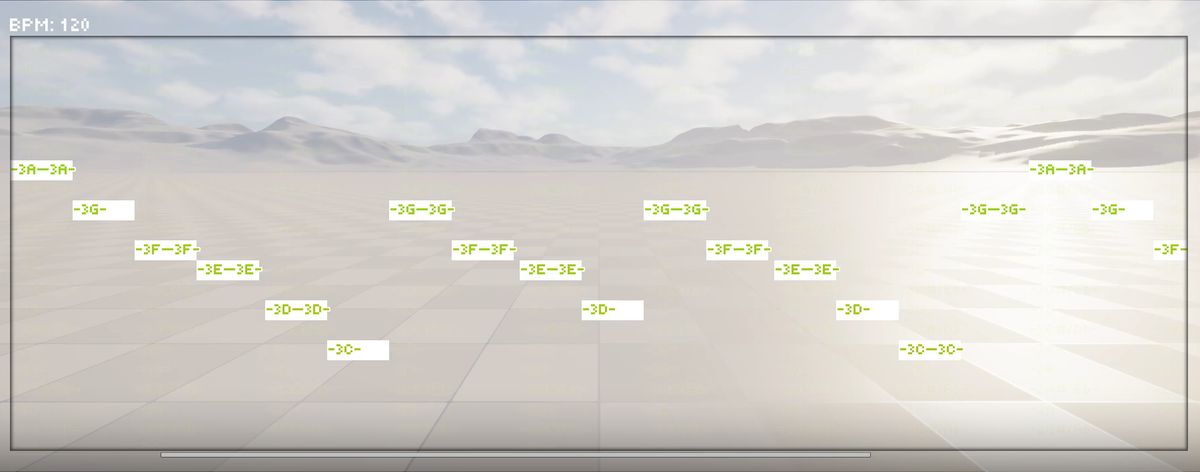
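In plain C++ terms, octave handling is mostly a bit of state plus the fact that each octave doubles the frequency. The sketch below is an illustration, not a copy of my setup. It assumes `o` followed by a number sets the octave, `>` goes up, and `<` goes down. Some MML dialects flip the direction of `<` and `>`, so check whichever one you're targeting.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical running octave state while walking an MML string.
struct OctaveState {
    int octave = 4;  // assumed default octave

    // command is 'o' (absolute, uses argument), '>' (up) or '<' (down).
    void Apply(char command, int argument = 0) {
        if (command == 'o') {
            octave = argument;
        } else if (command == '>') {
            ++octave;
        } else if (command == '<') {
            --octave;
        }
        assert(octave >= 0 && octave <= 8);  // arbitrary sanity range
    }
};

// Moving by whole octaves multiplies the frequency by 2 per octave.
double ShiftFrequency(double baseHz, int octaveDelta) {
    return std::ldexp(baseHz, octaveDelta);  // baseHz * 2^octaveDelta
}
```

So shifting A at 440 Hz up one octave gives 880 Hz, and down one gives 220 Hz.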

At this point I'd also been playing quite a bit with how to draw the scheduled notes. I ended up building several widgets nested inside each other in a couple of scroll bars, so you have this giant note wall. Of course, it's hard to tell which specific note is playing at any given point, so I visually toned it down to just the played notes and added some scrolling effects to keep the currently playing note visible.

It's still very much a work in progress, both in covering the standard parts of MML and in figuring out how I want to interact with it and turn it into an actual game of some sort, but I think it's a pretty data-flexible baseline to start with.

Anyway, that's all for now!